Non-commutative algebras,

computer algebra systems,

and theorem provers

Eric Wieser (efw27@)

Signal Processing Group

Non-commutative algebras

Non-commutative algebras Geometric motivation

Geometry is inherently non-commutative:

- applying 3D rotations in a different order gives a different result

- the oriented plane spanning $u$ and $v$ faces the opposite way to the one spanning $v$ and $u$

… algebraic representations of geometry are too:

- for rotation matrices, composition is multiplication, and $R_1R_2 \ne R_2R_1$ in general

- the normal vector of such a plane (in 3D) can be found with the cross product, and $u\times v = -v\times u$.

The matrix algebra $\mathbb{R}^{n \times n}$ is the typical choice for representing geometry, but is by no means the only choice available:

Quaternions

$\mathbb{H}$

Exterior algebra

$\bigwedge(V)$

Geometric algebra

$\mathcal{G}(Q)$

Non-commutative algebras Geometric examples

Quaternions, $\mathbb{H}$

Like the complex numbers, $q = r + x\mathrm{i} + y\mathrm{j} + z\mathrm{k}$, but with $i^2 = j^2 = k^2 = ijk = −1$.

In 3D, a rotation around the axis $u$ by $\theta$ can be represented as:

$$e^{\frac{\theta}{2}{(u_x\mathrm{i} + u_y\mathrm{j} + u_z\mathrm{k})}} = \cos \frac{\theta}{2} + (u_x\mathrm{i} + u_y\mathrm{j} + u_z\mathrm{k}) \sin \frac{\theta}{2}$$Applying a quaternion rotation is done as $qvq^{-1}$.

Every (normed) quaternion represents a rotation; typically a better choice than rotation matrices which can become non-orthonormal.

A division ring, unlike the matrix algebra; all non-zero quaternions have an inverse.

Exterior algebra, $\bigwedge(V)$

Geometric algebra, $\mathcal{G}(Q)$

Non-commutative algebras Geometric examples

Quaternions, $\mathbb{H}$

Exterior algebra, $\bigwedge(V)$

For an arbitrary vector space $V$ containing $u, v$, $u \wedge v = -v \wedge u$ represents an oriented plane at the origin spanning $u$ then $v$.

Unlike the cross product, generalizes to higher dimensions: $u \wedge v \wedge w$ is an oriented volume.

Geometric algebra, $\mathcal{G}(Q)$

An extension of exterior algebra that includes a metric $Q$, which result in a dot product.

Multiplication of vectors satisfies:

$$uv = u\cdot v + u \wedge v$$Non-commutative algebrasWeaker algebraic structures

- $ab \ne ba$; non-commutativity

-

As we saw, this is particularly easy to motivate through geometry. This paves the way to further

weakening

of our algebraic rules. - $a \ne 0, b \ne 0, ab = 0$; zero divisors

-

$\mathbb{R}^{n \times n}$ and $\bigwedge(B)$ and $\mathcal{G}(Q)$ all have this property.

This means $ab = ac \centernot\implies b = c$ even if $a \ne 0$!

- $a(bc) \ne (ab)c$; non-associativity

-

A simple example is the vector cross product:

$$u \times (v \times w) = (u \times v) \times w + v \times (u \times w)$$More examples: Lie algebras, Octonions, …

Forgetting these weaknesses leads to algebraic mistakes.

Can we software help us keep track of them?

Computer algebra systems

A computer algebra system (CAS) or symbolic algebra system (SAS) is any mathematical software with the ability to manipulate mathematical expressions in a way similar to the traditional manual computations of mathematicians and scientists.Computer algebra system Wikipedia

Useful for

- Roughly checking your work

- Answering what-if questions experimentally

- Solving large but tedious equations quickly

- …

Typical features

- Algebraic simplification

- Root finding

- Integration and differentiation

- Arbitrary precision calculations

- …

Notable implementations

(1965, BSD)

(1982, GPL)

(1982, Proprietary)

(1993, Proprietary)

(1998, Proprietary)

(2004, GPL)

Sympy

SymPy is a Python library for symbolic mathematics. It aims to become a full-featured computer algebra system (CAS) while keeping the code as simple as possible in order to be comprehensible and easily extensible. SymPy is written entirely in Python.

Why wasn't this on the previous slide?

Sage[Math] tries to gather together all the major open source mathematics software, and glue it together into a useful system. In fact, Sage[Math] includes SymPy as one of its systems.

What is the difference between SymPy and Sage[Math]? Aaron Meurer (Lead SymPy developer)

Sympy Examples

Solve $x^2 - 2 = 0$

>>> solve(x**2 - 2, x) $\left[ - \sqrt{2}, \ \sqrt{2}\right]$Take the derivative of $\sin{(x)}e^x$

>>> diff(sin(x)*exp(x), x) $e^{x} \sin{\left(x \right)} + e^{x} \cos{\left(x \right)}$Compute $\int_{-\infty}^\infty \sin{(x^2)}\,dx$

>>> integrate(exp(x)*sin(x) + exp(x)*cos(x), x) $e^{x} \sin{\left(x \right)}$Solve the differential equation $y'' - y = e^t$

>>> y = Function('y') >>> dsolve(Eq(y(t).diff(t, t) - y(t), exp(t)), y(t)) $y{\left(t \right)} = C_{2} e^{- t} + \left(C_{1} + \frac{t}{2}\right) e^{t}$The Power of Symbolic Computation SymPy.org

SympyExpression trees

>>> from sympy import *; from this_presentation import show_repr

Real numbers

>>> x, y = symbols('x y', real=True)

>>> expr = (x + y)*(x - y); expr

$(x - y)(x + y)$

>>> show_repr(expr)

Mul(Add($x$, Mul(Integer(-1), $y$)),

Add($x$, $y$))

Complex numbers

>>> x, y = symbols('x y', complex=True)

>>> expr = (x + y)*(x - y); expr

$(x - y)(x + y)$

>>> show_repr(expr)

Mul(Add($x$, Mul(Integer(-1), $y$)),

Add($x$, $y$))

Underlying representations

- Real

Symbol,Add,Mul, …- Complex

Symbol,Add,Mul, …- Matrix

MatrixSymbol,MatAdd,MatMul, …- Quaternions?

Matrices

>>> m, n = symbols('m n')

>>> X = MatrixSymbol('X', m, n)

>>> Y = MatrixSymbol('Y', m, n)

>>> expr = (X + Y)*(X - Y).T; expr

$(X + Y)(X^T - Y^T)$

>>> show_repr(expr)

MatMul(

MatAdd($X$, $Y$),

MatAdd(

Transpose($X$),

MatMul(Integer(-1), Transpose($Y$))))

SympyExpression trees

Quaternions

No symbolic quaternions; only quaternions with symbolic coefficients.

>>> x, xi, xj, xk = symbols('x x_i x_j x_k'); xq = Quaternion(x, xi, xj, xk)

>>> y, yi, yj, yk = symbols('y y_i y_j y_k'); yq = Quaternion(y, yi, yj, yk)

>>> expr = xq * yq; expr

$\left(x y - x_{i} y_{i} - x_{j} y_{j} - x_{k} y_{k}\right) + \left(x y_{i} + x_{i} y + x_{j} y_{k} - x_{k} y_{j}\right) i$

$\quad+ \left(x y_{j} - x_{i} y_{k} + x_{j} y + x_{k} y_{i}\right) j + \left(x y_{k} + x_{i} y_{j} - x_{j} y_{i} + x_{k} y\right)$

>>> show_repr(expr)

Quaternion(Add(Mul($x$, $y$), Mul(Integer(-1), $x_i$, $y_i$), Mul(Integer(-1), $x_j$, $y_j$), Mul(Integer(-1), $x_k$, $y_k$)),

Add(Mul($x$, $y_i$), Mul($x_i$, $y$), Mul($x_j$, $y_k$), Mul(Integer(-1), $x_k$, $y_j$)),

Add(Mul($x$, $y_j$), Mul(Integer(-1), $x_i$, $y_k$), Mul($x_j$, $y$), Mul($x_k$, $y_i$)),

Add(Mul($x$, $y_k$), Mul($x_i$, $y_j$), Mul(Integer(-1), $x_j$, $y_i$), Mul($x_k$, $y$)))

This makes quaternions hard to work with:

$x_qx_qx_q^{-1}y_q = x_qy_q$

>>> (xq * xq * xq**-1 * yq).simplify()

$\left(x y - x_{i} y_{i} - x_{j} y_{j} - x_{k} y_{k}\right) + \left(x y_{i} + x_{i} y + x_{j} y_{k} - x_{k} y_{j}\right) i$

$\qquad + \left(x y_{j} - x_{i} y_{k} + x_{j} y + x_{k} y_{i}\right) j + \left(x y_{k} + x_{i} y_{j} - x_{j} y_{i} + x_{k} y\right) k$

$\nabla_{\!x_q}(x_qy_q) = -2y_q$

>>> def q_diff(Φ, dq): # similar to ∇Φ

... ℍ = Quaternion

... I, J, K = ℍ(0,1,0,0), ℍ(0,0,1,0), ℍ(0,0,0,1)

... return (Φ.diff(dq.a) + I*Φ.diff(dq.b)

... + J*Φ.diff(dq.c) + K*Φ.diff(dq.d))

>>> q_diff(xq*yq, xq)

$- 2 y + - 2 y_{i} i + - 2 y_{j} j + - 2 y_{k} k$

SympyExamples with matrix determinants

>>> m, n = symbols('m, n')

>>> A = MatrixSymbol('A', n, n)

>>> B = MatrixSymbol('B', n, n)

>>> P = MatrixSymbol('P', m, n)

>>> Q = MatrixSymbol('Q', n, m)

>>> Eq(det(A*B), det(A)*det(B))

$\text{True}$

Eq can be used to write down a symbolic equality.

MatMul._eval_determinant is expanding the determinant for us.

>>> Eq(det(A*B*A.inv()), det(B))

$\left|{A^{-1}}\right| \left|{A}\right| \left|{B}\right| = \left|{B}\right|$

>>> Eq(det(A*B*A.inv()), det(B)).simplify()

$\text{True}$

_eval_determinant gets us partway there, but doesn't finish the job.

simplify() can clean up.

Weinstein–Aronszajnidentity)

>>> I = Identity >>> Eq(det(I(m) + P*Q), det(I(n) + Q*P)) $\left|{\mathbb{I} + PQ}\right| = \left|{\mathbb{I} + QP}\right|$ >>> Eq(det(I(m) + P*Q), ... det(I(n) + Q*P)).simplify() NonSquareMatrixError: Det of a non-square matrix>>> I = Identity >>> Eq(det(I(n) + A*B), det(I(n) + B*A)) $\left|{\mathbb{I} + AB}\right| = \left|{\mathbb{I} + BA}\right|$ >>> Eq(det(I(n) + A*B), ... det(I(n) + B*A)).simplify() AttributeError: 'Mul' object has no attribute 'shape'

There is no MatAdd._eval_determinant to help here.

simplify() is ad-hoc, and sometimes crashes! Switching to square matrices makes things worse!

SympyWhat's going wrong?

Consider supporting a new algebra, MyAlg. We have two big hurdles:

Expression tree extension

It looks like we have to build new MyAlgAdd, MyAlgMul, … objects.

This scales poorly if we want to work with things like matrices of MyAlg; do we need MatMyAlgAdd e.t.c.?

If we get this wrong, things like simplify() fail if they expect MyAlgMul but get a Mul.

Supporting symbols

Defining our objects as structures with fields like we would in a conventional programming language isn't enough.

Expression tree objects needs to know how to simplify themselves.

This local simplification doesn't scale to things like $\operatorname{det}(1 + AB) = \operatorname{det}(1 + BA)$.

Are we sure our simplifications are mathematically sound?

What if our programming language could handle all of this?

Theorem provers

A proof assistant [or theorem prover] is a piece of software that provides a language for defining objects, specifying properties of these objects, and proving that these specifications hold. The system checks that these proofs are correct down to their logical foundation.

These tools are often used to verify the correctness of programs. But they can also be used for abstract mathematics [...]. In a formalization, all definitions are precisely specified and all proofs are virtually guaranteed to be correct.

What is a proof assistant? Lean community website

Theorem proversNotable implementations

- About a decade less mature than computer algebra systems (gray)

- Mizar (1973), Isabelle (1986), HOL Family (1988)

- Coq (1989), Metamath (1993), Agda (2007)

- Lean 1 (2013) & Lean 2 (2015)

Lean had the advantage of being born later and learning from past mistakes.

CICM 2020 slack Mario Carneiro, Lean Maintainer

- Lean 3 (2016)

- Actively maintained by the community (v3.24.0 to v3.35.1 in 2021)

- Can be used in-browser or in supported IDEs: VS Code and Emacs

- Lean 4 (202⍰)

- Better support for domain-specific syntax and custom automation

- Native code and its formal verification in one language

- In active development at Microsoft Research

Theorem proversDifferences with computer algebra systems

Computer algebra systems

Easier and faster to use

More of a focus of algorithms to solve specific problems

Sometimes tricky to extend

No strong guarantees of correctness

Theorem provers

Steeper learning curve

Focus on correctness; very small core

which requires trust.

Very extensible, as even things like equality, =, are built as extensions to the core!

Lean in Practice

Lean in Practice Definitions, Types, and Terms

-- name : type := value (or "term")

def two : ℕ := 2

def double (a : ℕ) : ℕ := two * a

-- syntax for `Exists (λ x : ℕ, eq (double x) 3)`

def unlikely : Prop := ∃ x : ℕ, double x = 3

lemma unlikely_proof : unlikely := sorry

lemma double_is_add_self (a : ℕ) : double a = a + a :=

by { dsimp [double, two], apply two_mul }

ℕ = nat.

mathematicians [have] lower tolerance for unintuitive interfaces than computer scientists.

CICM 2020 slack Mario Carneiro, PhD in Logic, Lean Maintainer

double is function that takes and outputs a ℕ.

It has type ℕ → ℕ.

A Prop is a mathematical statement.

Behind this nice syntax lies an expression tree

Prop instances are themselves Types.

Values are their proofs.

Note that a is analogous to a sympy.Symbol here

Arguments to functions and symbols in mathematical statements are the same thing!

double_is_add_self 1 is a proof that double 1 = 1 + 1

dsimp and apply are tactics,

to help us construct proofs interactively.

two_mul is a lemma from the library.

Lean in Practice class for abstraction

class for abstraction

class add_comm_group (A : Type)

extends has_add A, has_zero A, has_neg A : Type :=

(add_comm : ∀ a b : A, a + b = b + a )

(add_assoc : ∀ a b c : A, (a + b) + c = a + (b + c))

(zero_add : ∀ a : A, 0 + a = a )

(add_one : ∀ a : A, a + 0 = a )

(add_left_neg : ∀ a : A, -a + a = 0 )

add_comm_group is the name of our type-class.

A makes this a dependent type,

add_comm_group A is a collection of proofs that A forms an abelian group.

extends is inheritance.

Used here to provide +, 0 and - for A.

Theorems consume the API so as to generalize to all abelian groups...

lemma neg_add {A : Type} [add_comm_group A] (a b : A) :

-(a + b) = -a + -b := sorry

$\mathbb{H}$ and $\mathbb{R}^{m\times n}$ implement the API to prove they are abelian:

instance : add_comm_group ℍ :=

{ add_assoc := sorry, add_comm := sorry,

zero_add := sorry, add_zero := sorry,

add_left_neg := sorry }

instance : add_comm_group (matrix m n ℝ) :=

{ add_assoc := sorry, add_comm := sorry,

zero_add := sorry, add_zero := sorry,

add_left_neg := sorry }

Lean in PracticeExamples with matrix determinants

import linear_algebra.matrix.nonsingular_inverse

variables {R m n : Type} [comm_ring R] [fintype m] [fintype n] [decidable_eq m] [decidable_eq n]

variables (A B : matrix n n R) (P : matrix m n R) (Q : matrix n m R)

open matrix -- for `det`

open_locale matrix -- for `⬝`

example :

det (A⬝B) = det A * det B :=

by simp

⬝ is matrix multiply.

example : det (A⬝B⬝A⁻¹) = det B := sorryexample (hA : is_unit A) : det (A⬝B⬝A⁻¹) = det B := sorryexample (hA : is_unit A) : det (A⬝B⬝A⁻¹) = det B := by simp [hA]example (hA : is_unit A) : det (A⬝B⬝A⁻¹) = det B := by library_searchexample (hA : is_unit A) : det (A⬝B⬝A⁻¹) = det B := by exact det_conj hA B

We need a proof that A is invertible, spelt is_unit A.

simp takes us to a dead end.

library_search finds the proof.

example :

det (1 + P⬝Q) = det (1 + Q⬝P) :=

by simp

Lean doesn't know this result yet; let's prove it!

Lean in PracticeProving the Weinstein–Aronszajn identity

How do we prove $\operatorname{det} (1 + PQ) = \operatorname{det} (1 + QP)$ by hand?

/-- The Weinstein–Aronszajn identity -/

lemma matrix.det_one_plus_comm :

det (1 + P⬝Q) = det (1 + Q⬝P) :=

begin

calc det (1 + P⬝Q) = det (from_blocks 1 (-P) Q 1) : _

... = det (1 + Q⬝P) : _,

{ rewrite det_from_blocks_one₂₂,

rewrite matrix.neg_mul,

rewrite sub_neg_eq_add, },

{ rw [det_from_blocks_one₁₁, matrix.mul_neg,

sub_neg_eq_add] },

end

R : Type m : Type n : Type _inst_1: comm_ring R _inst_2: fintype m _inst_3: fintype n _inst_4: decidable_eq m _inst_5: decidable_eq n P : matrix m n R Q : matrix n m R ⊢ det (1 + P⬝Q) = det (1 + Q⬝P)P : matrix m n R Q : matrix n m R ⊢ det (1 + P⬝Q) = det (1 + Q⬝P)P : matrix m n R Q : matrix n m R ⊢ det (1 + P⬝Q) = det (from_blocks 1 (-P) Q 1) P : matrix m n R Q : matrix n m R ⊢ det (from_blocks 1 (-P) Q 1) = det (1 + Q⬝P)P : matrix m n R Q : matrix n m R ⊢ det (1 + P⬝Q) = det (from_blocks 1 (-P) Q 1)P : matrix m n R Q : matrix n m R ⊢ det (1 + P⬝Q) = det (1 - -P⬝Q)P : matrix m n R Q : matrix n m R ⊢ det (1 + P⬝Q) = det (1 - -(P⬝Q))goals accomplished 🎉⊢ det (from_blocks 1 (-P) Q 1) = det (1 + Q⬝P)goals accomplished 🎉

calc tactic. Each _ is a hole, leaving us with two goals (⊢).

We can use {} braces to focus on just the first goal.

Let's expand det (from_blocks ...) around the bottom right, ...

… push the negation outside the product, …

… and cancel the negations. We're done!

Now we can go back to the other goal. We can solve it the same way, …

… but let's write it more concisely.

How did Lean know det_from_blocks_one₁₁ and det_from_blocks_one₂₂?.

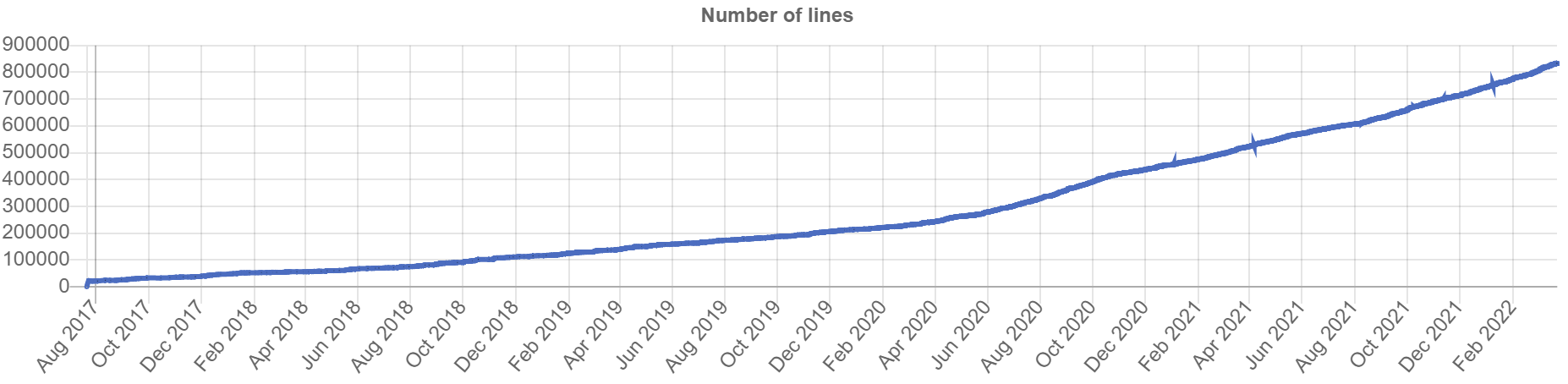

Mathlib

Mathlib

A mathematical library for Lean, comparable to:

- Coq's

mathematical components

- Isabelle's

HOL-Analysis

Curated by a diverse set of almost 250 maintainers and contributors:

- role: Professors, Post-docs, PhD students, Undergrads

- origin: USA, France, The Netherlands, UK, Canada, Australia, …

- expertise: Mathematics, Computer Science, Physics, AI, …

What does Mathlib know?

What does Mathlib know?Parseval's theorem

These amplitudes [$c_n$] can be related to the power content of the signal $g(t)$ over one period

$$\sum_{n=-\infty}^{\infty}|c_n|^2 dt = \frac{1}{T}\int_{0}^{T}|g(t)|^2 dt$$where $g(t) = \sum_{n=-\infty}^{\infty}c_ne^{jn\omega_0t}$

CUED IB Paper 6, Handout 2, Lent 2021 S. Godsill

The sum of the squared norms of the Fourier coefficients equals the $L^2$ norm of the function.

lemma tsum_sq_fourier_series_repr (g : Lp ℂ 2 haar_circle) : ∑' n : ℤ, ∥fourier_series.repr g n∥^2 = ∫ t : circle, ∥g t∥^2 ∂haar_circleFourier analysis on the circle Mathlib docs

Some translation required!

one period↔

t : circle

$c_n$↔

fourier_series.repr g n

$\frac{1}{T}dt$↔

∂haar_circle

(the measurearound a circle that sums to 1)

$g(t) = \sum \cdots$↔

g : Lp ℂ 2 haar_circle

(g is a function of type circle → ℂ, with finite $L^2$ norm)

What does Mathlib know?The Cayley-Hamilton theorem

The characteristic polynomial of $A$ is defined as \begin{align} p_{A}(\lambda)&=\det(\lambda I_{n}-A) \\ \textit{[...]}\quad&=\lambda^{n}+c_{n-1}\lambda^{n-1}+\cdots+c_{1}\lambda+c_{0} \end{align} One can create an analogous polynomial $p_{A}(A)$ [...]. The Cayley–Hamilton theorem states that $p_A(A) = 0$.

Cayley–Hamilton theorem Wikipedia

The characteristic polynomial of a matrix, applied to the matrix itself, is zero. This holds over any commutative ring.

lemma matrix.aeval_self_charpoly {R : Type} [comm_ring R] {n : Type} [decidable_eq n] [fintype n] (A : matrix n n R) : polynomial.aeval A A.charpoly = 0Characteristic polynomials and the Cayley-Hamilton theorem Mathlib docs

$p_A$↔

A.charpoly

$p(A)$↔

polynomial.aeval A p

Note that by [the] Cayley-Hamilton theorem, $e^{A\tau} = I\alpha_0(\tau) + \cdots + A^{n-1}\alpha_{n-1}$

3F2: Systems and Control, Lecture 4: controllability R. Sepulchre

Mathlib does not yet have this corollary, but it is close…

What does Mathlib know?Half an Undergraduate Mathematics Degree

What does Mathlib know?The algebra that I taught it

dual_number R-

Dual numbers: $R[\varepsilon]$ or $x + \varepsilon y$, where $\varepsilon^2 = 0$.

alternating_map R V W ι-

Alternating maps: $F : V^n \to W$ where $F(\ldots, v_i, \ldots, v_j, \ldots) = 0$ if $v_i = v_j$ for some $i \ne j$.

/-- det is an `alternating_map` in the rows of the matrix. -/ def matrix.det_row_alternating : alternating_map R (n → R) R n/-- If the arguments are linearly dependent then the result is `0`. -/ lemma alternating_map.map_linear_dependent [no_zero_smul_divisors K W] (f : alternating_map K V W ι) (v : ι → V) (h : ¬linear_independent K v) : f v = 0 clifford_algebra Q-

Geometric algebra, $\mathcal{G}(Q)$ (

Clifford algebra

to mathematicians)./-- The clifford algebra over `quaternion_Q` is isomorphic to `ℍ`. -/ def clifford_algebra_quaternion.equiv : clifford_algebra quaternion_Q ≃ₐ[ℝ] ℍ[ℝ]

What does Mathlib know?The algebra that I taught it

graded_algebra A-

Graded algebras: $A = \bigoplus_i A_i$ where $A_iA_j \subseteq A_{i+j}$

class set_like.graded_monoid (A : ι → S) : (one_mem : 1 ∈ A 0) (mul_mem : ∀ {i j : ι} {gi gj : R}, gi ∈ A i → gj ∈ A j → gi * gj ∈ A (i + j))/-- An internally-graded `R`-algebra `A` is one that can be decomposed into a collection of `submodule R A`s indexed by `ι` such that the canonical map `A → ⨁ i, 𝒜 i` is bijective. -/ class graded_algebra (𝒜 : ι → submodule R A) extends set_like.graded_monoid 𝒜 := (decompose' : A → ⨁ i, 𝒜 i) (left_inv : function.left_inverse decompose' (direct_sum.submodule_coe 𝒜)) (right_inv : function.right_inverse decompose' (direct_sum.submodule_coe 𝒜))/-- The clifford algebra is graded by the even and odd parts. -/ def clifford_algebra.graded_algebra : graded_algebra (clifford_algebra.even_odd Q) - Miscellaneous proofs

- The matrix lemma we proved in this presentation, and hundreds of smaller results in linear algebra, ring theory, and more.

What does Mathlib know?The algebra this audience wants to teach it?

- How can I learn to use Lean?

-

inductive mynat | zero : mynat | succ (n : mynat) : mynatIn this [in-browser] game, you get own version of the natural numbers, called

mynat, in an interactive theorem prover called Lean. [...] You're going to prove mathematical theorems using the Lean theorem prover.

In other words, you're going to solve levels in a computer game.The Natural Number Game Kevin Buzzard and Mohammad Pedramfar

More learning resources at leanprover-community.github.io/learn.

- What would be a challenging first project?

-

Formalize some first year example papers, with the help of the Lean user community at leanprover.zulipchat.com.

Pick something from the

Missing undergraduate mathematics in mathlib

list on the earlier slide.

What have other people built with Lean?

- Integration with Computer algebra software

-

We show how to make use of the Lean metaprogramming framework to verify certain Mathematica computations, so that the rigor of the proof assistant is not compromised

A Bi-Directional Extensible Interface Between Lean and Mathematica (2022) Robert Y. Lewis, Minchao Wu

- Bug-free machine learning systems

-

We generate a formal (i.e. machine-checkable) proof that the gradients sampled by the system are unbiased estimates of the true mathematical gradients.

Developing bug-free machine learning systems with formal mathematics (2017) Daniel Selsam, Percy Liang, David L. Dill

- Solving math olympiad problems automatically

-

Formal Mathematics Statement Curriculum Learning Stanislas Polu, Jesse Michael Han, Kunhao Zheng, Mantas Baksys, Igor Babuschkin, Ilya Sutskever

- Contributions to the edge of mathematical research

-

I now think it’s sensible in principle to formalize whatever you want in Lean. There’s no real obstruction.

— Peter Scholze, Fields MedallistProof Assistant Makes Jump to Big-League Math Quanta Magazine

References

SymPy: symbolic computing in Python (2017) Meurer A, Smith CP, Paprocki M, Čertík O, Kirpichev SB, Rocklin M, Kumar A, Ivanov S, Moore JK, Singh S, Rathnayake T, Vig S, Granger BE, Muller RP, Bonazzi F, Gupta H, Vats S, Johansson F, Pedregosa F, Curry MJ, Terrel AR, Roučka Š, Saboo A, Fernando I, Kulal S, Cimrman R, Scopatz A

Quaternionic analysis (1977) A. Sudbery

The Lean Theorem Prover (System Description) (2015) Leonardo de Moura, Soonho Kong, Jeremy Avigad, Floris van Doorn, Jakob von Raumer

The lean mathematical library (2020) The mathlib Community

Formalizing Geometric Algebra in Lean (2021) Eric Wieser, Utensil Song

Scalar actions in Lean's mathlib (2021) Eric Wieser

The lean community website: leanprover-community.github.io

These slides: eric-wieser.github.io/divf-2021

(these references, and the ones under all quote callouts in the slides, are clickable on the online version)